VST Teams

AI-powered video and media analysis — now a full collaborative investigation platform.

Overview

Investigations increasingly depend on large volumes of digital media: video recordings, images, audio, chat logs, and transcripts. Manual review does not scale, and one analyst cannot realistically triage a large seizure alone.

VST Teams is a collaborative investigation platform. Multiple analysts work the same case concurrently, with named sessions, attributed bookmarks, and aggregated triage across folders. It reduces time-to-evidence while maintaining evidential integrity.

The platform is deployed on-premise, air-gapped, or in your agency’s cloud. It is already in operational use.

What the Platform Does

The platform ingests raw media and produces structured outputs that investigators can search, review, and reference.

It supports:

- Video evidence from seized devices, body-worn cameras, and online sources

- Still images processed alongside video in a unified gallery

- Audio and text evidence, including multilingual material

- Folder-level aggregation for triage across large seizures

The output is an investigative workspace, not a black-box result.

Core Capabilities

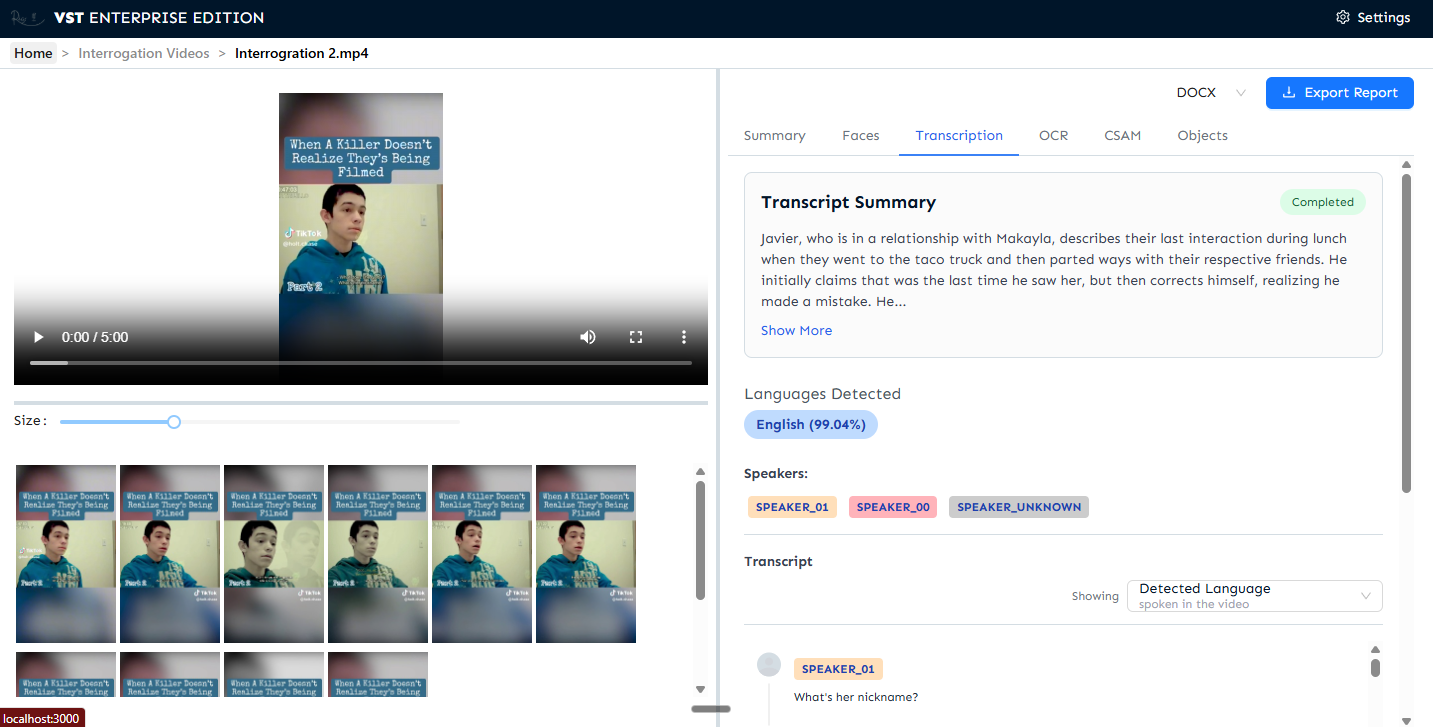

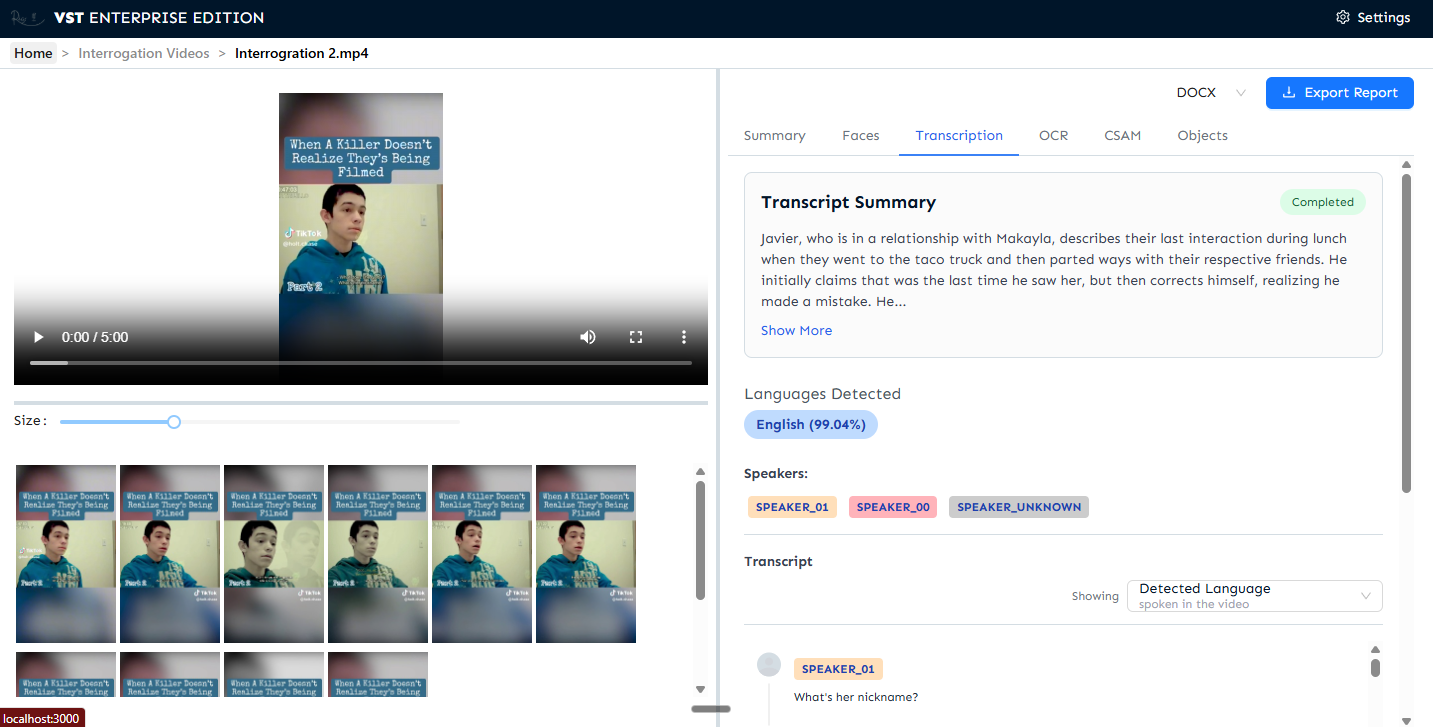

Video navigation and rapid triage

Long videos are segmented into navigable thumbnails linked to timestamps. Hovering over a file shows a silent thumbnail preview before the file is opened, enabling triage across large seizures.
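
As an illustration only, the idea of thumbnails linked to timestamps can be sketched as follows. The fixed 30-second interval and the names `Segment`, `format_timestamp`, and `segment_video` are assumptions made for this example; they do not describe the platform's actual segmentation logic or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    index: int      # position of the thumbnail in the strip
    start_s: float  # offset into the video, in seconds
    label: str      # HH:MM:SS label shown with the thumbnail


def format_timestamp(seconds: float) -> str:
    """Render a second offset as HH:MM:SS (fractions are truncated)."""
    total = int(seconds)
    hours, rem = divmod(total, 3600)
    minutes, secs = divmod(rem, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}"


def segment_video(duration_s: float, interval_s: float = 30.0) -> list[Segment]:
    """Return one thumbnail anchor per fixed interval (hypothetical scheme)."""
    if duration_s <= 0 or interval_s <= 0:
        return []
    segments = []
    index = 0
    start = 0.0
    while start < duration_s:
        segments.append(Segment(index, start, format_timestamp(start)))
        index += 1
        start = index * interval_s  # multiply rather than accumulate to avoid drift
    return segments
```

Under these assumptions, a 95-second clip yields four anchors, at 0, 30, 60, and 90 seconds. Selecting a thumbnail would seek playback to its `start_s`.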

Transcription and speaker identification

Accurate transcripts from audio and video, with speakers identified and labelled separately. Automatic language detection with translation into the analyst’s working language.

Face detection and extraction

Faces appearing in media are detected, grouped, and presented in a structured gallery for review and export — across video and still images.

Text extraction from video and images

On-screen text is captured and made searchable: documents, signs, phone screens, subtitles, usernames, and overlays.

Automatic entity extraction

People, organisations, locations, and dates are automatically extracted from transcripts and on-screen text using named entity recognition (NER). Entities can be merged, split, relabelled, and exported.
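
A minimal sketch of what merging and relabelling extracted entities could look like is given below. The `Entity` structure, the label strings, and the mention reference format are hypothetical and chosen for illustration; the platform's real data model is not described here.

```python
from dataclasses import dataclass, field


@dataclass
class Entity:
    name: str
    label: str  # hypothetical labels: "PERSON", "ORG", "LOCATION", "DATE"
    mentions: list[str] = field(default_factory=list)  # where the entity was seen


def merge_entities(keep: Entity, duplicate: Entity) -> Entity:
    """Fold `duplicate` into `keep`, preserving order and dropping repeated mentions."""
    merged = list(keep.mentions)
    for mention in duplicate.mentions:
        if mention not in merged:
            merged.append(mention)
    return Entity(keep.name, keep.label, merged)


def relabel(entity: Entity, new_label: str) -> Entity:
    """Return a copy of `entity` with a corrected label."""
    return Entity(entity.name, new_label, list(entity.mentions))
```

For example, merging "J. Smith" into "John Smith" would keep the second name and combine the mentions from both, so that every source reference stays attached to the surviving entity.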

Multi-user workflows

Named user accounts with individual sessions. Bookmarks and annotations are attributed per analyst. Licence enforcement is handled at login. Folder-level panels surface file counts, detected faces, text regions, and processing status across entire folders.
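
The folder-level panels described above amount to an aggregation over per-file results. The sketch below shows one way such a roll-up could be computed; the `FileRecord` fields, the status values, and `summarise_folders` are assumptions for illustration, not the platform's schema.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class FileRecord:
    folder: str
    faces: int         # faces detected in this file
    text_regions: int  # on-screen text regions found in this file
    status: str        # hypothetical values: "queued", "processing", "done"


def summarise_folders(records: list[FileRecord]) -> dict[str, dict]:
    """Roll per-file results up into one summary per folder."""
    summary: dict[str, dict] = {}
    for record in records:
        panel = summary.setdefault(
            record.folder,
            {"files": 0, "faces": 0, "text_regions": 0, "status": Counter()},
        )
        panel["files"] += 1
        panel["faces"] += record.faces
        panel["text_regions"] += record.text_regions
        panel["status"][record.status] += 1
    return summary
```

A summary of this shape gives a reviewer the file count, face and text totals, and processing progress for a folder without opening any individual file.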

Search, analysis, and reporting

Full-text search across all processed media, filtered by face, entity, keyword, or time. Exportable structured reports for case management and downstream tools.

Enrichment Modules

Additional enrichment modules integrate directly into the platform:

- Age Estimation — highlighting potentially sensitive content involving minors

- Location Estimation (Morrigan) — estimating likely capture locations from visual cues

- Text & Audio Summarisation — rapid triage of transcripts and chat logs

Modules are also available as standalone APIs where required.

Deployment and Control

- On-premise, air-gapped, or deployed to your agency’s cloud

- Fully containerised — runs anywhere containers run, no proprietary infrastructure

- No external data dependency in air-gapped mode

- Data and outputs remain under customer control

Designed to support compliance with GDPR, the Law Enforcement Directive (Directive (EU) 2016/680), and the EU Artificial Intelligence Act.

Engagement

Most organisations deploy the platform as a complete solution, enabling selected modules according to operational need. Individual capabilities can also be deployed independently.